Summary

This chapter describes the configuration of SR-TE policies in a multi-domain environment. This includes regular policies (with a node endpoint) and EPE policies. EPE-only policies don’t check the IGP topology, so they work exactly the same way in single-domain and multi-domain topologies.

In order to calculate TE policies, Traffic Dictator (TD) must have a full view of the IGP topology. The following requirements and behaviors apply:

- TD must receive BGP-LS information about all IGP areas. This means that either TD has a BGP-LS session with each ABR, or the ABRs also act as BGP route reflectors and collect BGP-LS information from all areas.

- In order to build a multi-domain policy, TD requires a strict or loose explicit path to help it find the next ABR. The minimal configuration is a loose explicit path to the ABR loopback.

- When different IGP instances are connected by a BGP link, a strict explicit path is required to navigate through the BGP links.

- When calculating TE policies, TD ignores re-advertised prefixes and SIDs with the “nophp” flag.

- TD always includes the ABR prefix SID in the segment list.
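The last rule in the list can be pictured with a small model. The following Python sketch is not TD's implementation; it only illustrates, under simplifying assumptions, how a multi-domain segment list ends up carrying the SID of every ABR where the path crosses from one domain to the next. The function name `stitch_segments` and the data layout are hypothetical; the SID values are borrowed from the example policy later in this chapter.

```python
from typing import List, Optional, Tuple

# Hypothetical model of one leg of the path:
# (SIDs needed inside one IGP domain, prefix SID of the ABR that ends the leg,
#  or None when the leg ends at the policy endpoint)
Leg = Tuple[List[int], Optional[int]]


def stitch_segments(legs: List[Leg]) -> List[int]:
    """Concatenate per-domain SIDs, always adding the exit ABR SID of each leg."""
    segment_list: List[int] = []
    for intra_domain_sids, abr_sid in legs:
        segment_list.extend(intra_domain_sids)
        # Add the ABR SID unless the leg already ends with it.
        if abr_sid is not None and (not segment_list or segment_list[-1] != abr_sid):
            segment_list.append(abr_sid)
    return segment_list


# Leg 1: topology 101, steer via 16004, exit through the ABR with SID 16006.
# Leg 2: topology 102, go straight to the endpoint SID 16011.
print(stitch_segments([([16004], 16006), ([16011], None)]))
```

The point of the model is only that the ABR SID is added even when the intra-domain constraints would not require it.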

Allowed designs

Multi-level IS-IS / Multi-area OSPF

This is the most basic design: a single IGP instance split into multiple areas. For example:

In such designs, routes from the L1/non-zero area are normally leaked into L2/area 0, and SIDs are advertised with the “nophp” flag. This allows for IP/MPLS reachability between endpoints even though they don’t have a full view of the IGP topology.

Multiple IGP instances

This is a more common design for large-scale ISPs, known as Seamless MPLS or Unified MPLS.

Normally there is no redistribution between instances; instead, BGP-LU is used to connect the different IGP domains.

Considerations similar to those for the multi-area IGP design apply here. In fact, this is a recommended design: there is no risk associated with route leaking or redistribution, and SPF caching works better than in the multi-area design, so performance on a large topology is better.

Note: TD must receive BGP-LS information from different instances with different topology-ids. A misconfigured topology-id on routers causes problems similar to those caused by a duplicate router-id.

Mixing IS-IS and OSPF

This design is fine too. Make sure that the IS-IS TE router-id on ABR nodes is the same as their OSPF router-id.

Mixing IPv4-only and IPv6-only instances

This is also supported. ABRs must support both IPv4 and IPv6. In this topology, an IPv4 policy from topology 101 to topology 103 first uses an IPv4 SID to get to the first ABR, then an IPv6 SID to get to the second ABR, and then an IPv4 SID again to get to the endpoint.
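As a rough illustration of the IPv4 / IPv6 / IPv4 sequence described above, the sketch below picks, for each leg of the path, the SID of the target node in the address family of the topology being crossed. It is a toy model, not TD's logic. The node names, the SID table (including the value 17006) and the function name `sids_for_legs` are assumptions made for the example.

```python
from typing import Dict, List, Tuple

# Hypothetical per-node SID table: at most one prefix SID per address family.
# ABRs (R6, R11) carry both families; the endpoint (R15) is IPv4-only here.
NODE_SIDS: Dict[str, Dict[str, int]] = {
    "R6": {"ipv4": 16006, "ipv6": 17006},
    "R11": {"ipv4": 16011, "ipv6": 17011},
    "R15": {"ipv4": 16015},
}

# Address family of each topology: 101 and 103 IPv4-only, 102 IPv6-only.
TOPOLOGY_FAMILY: Dict[int, str] = {101: "ipv4", 102: "ipv6", 103: "ipv4"}


def sids_for_legs(legs: List[Tuple[int, str]]) -> List[int]:
    """For each (topology-id, target node) leg, use the target's SID in that topology's family."""
    result: List[int] = []
    for topology_id, node in legs:
        family = TOPOLOGY_FAMILY[topology_id]
        if family not in NODE_SIDS[node]:
            raise ValueError(f"{node} has no {family} SID usable in topology {topology_id}")
        result.append(NODE_SIDS[node][family])
    return result


# Cross 101 to the first ABR, 102 to the second ABR, 103 to the endpoint.
print(sids_for_legs([(101, "R6"), (102, "R11"), (103, "R15")]))
```

The `ValueError` branch reflects the requirement that ABRs support both address families: a node that is only reachable in one family cannot be the target of a leg through a topology that runs the other.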

Connecting IGP domains by BGP links

This is supported too, and multiple BGP links can connect different IGP domains. TD must receive BGP-LS information about the IGP topology and BGP Peer SIDs (or BGP-LU EPE routes), and a strict explicit path is required to navigate through the BGP links. The path is still calculated dynamically: TD checks the BGP Peer SIDs and applies path constraints.

Simple multi-domain policy

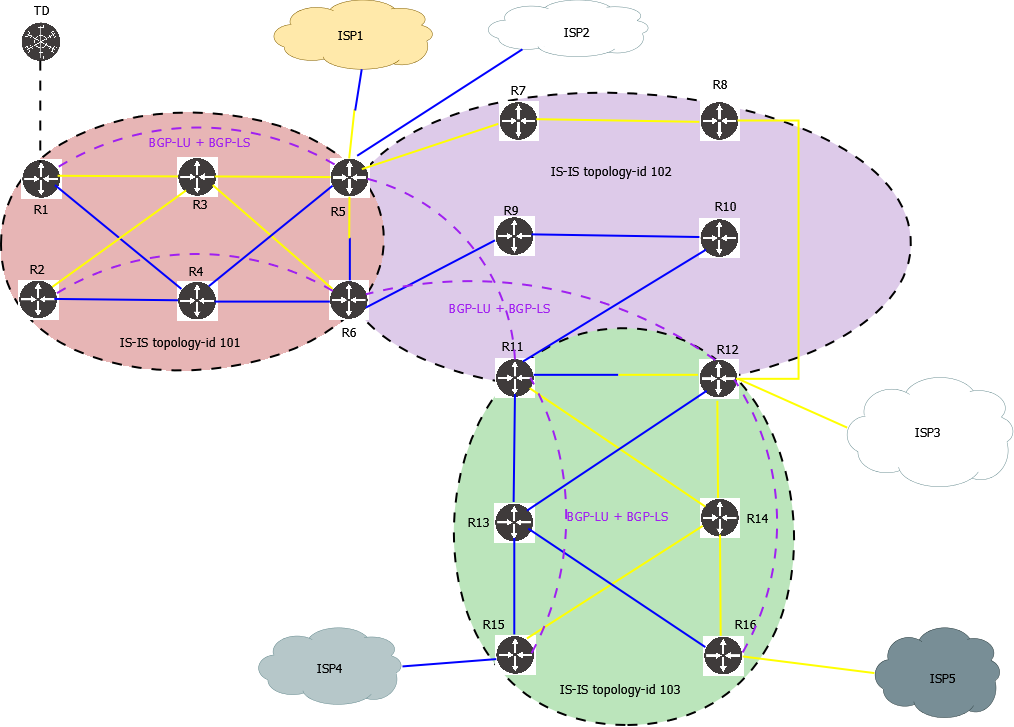

Consider the following topology:

This is classical Seamless MPLS: three IS-IS instances, connected by BGP-LU for end-to-end reachability. Routers connected to the ISP also allocate BGP Peer SIDs. The existing BGP-LU sessions are also used to distribute BGP-LS and BGP-SRTE routes, so there is no need to set up any additional BGP sessions between TD and the routers.

A simple policy to steer traffic from R1 to R11 using only blue links:

traffic-eng policies

!

policy R1_R11_BLUE_IPV4

headend 1.1.1.1 topology-id 101

endpoint 11.11.11.11 color 100

binding-sid 15003

priority 5 5

install direct srte 192.168.0.101

!

candidate-path preference 100

explicit-path R6_loose_IPV4

metric igp

affinity-set BLUE_ONLY

bandwidth 100 mbps

Explicit path:

traffic-eng explicit-paths

!

explicit-path R6_loose_IPV4

index 10 loose 6.6.6.6

6.6.6.6 is the loopback of R6, which is an ABR (it participates in both topologies 101 and 102).
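When many explicit paths have to be generated, a small helper can render them from a hop list. The sketch below is an assumption-laden convenience script, not part of TD: the function name `render_explicit_path` is hypothetical, and it emits only the `explicit-path` and `index <n> strict|loose <address>` lines that appear in this chapter, numbering hops in steps of 10 as the examples do.

```python
import ipaddress
from typing import List, Tuple


def render_explicit_path(name: str, hops: List[Tuple[str, str]]) -> str:
    """Render an explicit path using only the syntax shown in this chapter."""
    lines = ["traffic-eng explicit-paths", "!", f"explicit-path {name}"]
    for position, (mode, address) in enumerate(hops, start=1):
        if mode not in ("strict", "loose"):
            raise ValueError(f"unknown hop type: {mode}")
        # Accepts IPv4 and IPv6; raises ValueError on a malformed address.
        ipaddress.ip_address(address)
        lines.append(f"index {position * 10} {mode} {address}")
    return "\n".join(lines)


# Minimal multi-domain path: one loose hop pointing at the ABR loopback.
print(render_explicit_path("R6_loose_IPV4", [("loose", "6.6.6.6")]))
```

Validating the addresses before rendering catches typos early, which matters for strict paths where a single wrong hop invalidates the whole path.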

Check the policy:

TD1#show traffic-eng policy R1_R11_BLUE_IPV4 detail

Detailed traffic-eng policy information:

Traffic engineering policy "R1_R11_BLUE_IPV4"

Valid config, Active

Headend 1.1.1.1, topology-id 101, Maximum SID depth: 10

Endpoint 11.11.11.11, color 100

Endpoint type: Node, Topology-id: 102, Protocol: isis, Router-id: 0011.0011.0011.00

Setup priority: 5, Hold priority: 5

Reserved bandwidth bps: 100000000

Install direct, protocol srte, peer 192.168.0.101

Policy index: 5, SR-TE distinguisher: 16777221

Binding-SID: 15003

Candidate paths:

Candidate-path preference 100

Path config valid

Metric: igp

Path-option: explicit

Explicit path name: R6_loose_IPV4

Affinity-set: BLUE_ONLY

Constraint: include-all

List: ['BLUE']

Value: 0x1

This path is currently active

Calculation results:

Aggregate metric: 50

Topologies: ['101', '102']

Segment lists:

[16004, 16006, 16011]

Policy statistics:

Last config update: 2024-09-06 10:26:46,385

Last recalculation: 2024-09-06 10:28:36.836

Policy calculation took 0 miliseconds

This output shows that the policy traverses topologies 101 and 102. Also note that the SID list includes 16006, which is the R6 SID. TD always uses the ABR SID in multi-domain policies.
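To check many policies at once, the two fields discussed above can be pulled out of the show output with a short script. This is a minimal sketch that assumes the output format is exactly as printed in this chapter (a `Topologies:` line and bracketed lists after `Segment lists:`); the function name `parse_calculation_results` is hypothetical, and how the text is collected from TD is left out.

```python
import ast
import re
from typing import Dict


def parse_calculation_results(show_output: str) -> Dict[str, list]:
    """Extract the traversed topologies and the segment lists from the show output."""
    topologies: list = []
    segment_lists: list = []
    in_segment_lists = False
    for raw in show_output.splitlines():
        line = raw.strip()
        if not line:
            continue  # the output may contain blank lines between fields
        if line.startswith("Topologies:"):
            topologies = ast.literal_eval(line.split(":", 1)[1].strip())
            in_segment_lists = False
        elif line.startswith("Segment lists:"):
            in_segment_lists = True
        elif in_segment_lists and re.fullmatch(r"\[[\d,\s]*\]", line):
            segment_lists.append(ast.literal_eval(line))
        else:
            in_segment_lists = False
    return {"topologies": topologies, "segment_lists": segment_lists}


sample = """
Calculation results:
Aggregate metric: 50
Topologies: ['101', '102']
Segment lists:
[16004, 16006, 16011]
Policy statistics:
"""
result = parse_calculation_results(sample)
print(result)
print("multi-domain:", len(result["topologies"]) > 1)
```

A policy whose `Topologies` list has more than one entry is a multi-domain policy, and its segment list can then be checked for the expected ABR SID.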

Explicit path and mixing IPv4/IPv6

Let’s say there is a requirement to steer traffic from R1 to R15 using only blue links, but without using affinity, relying only on a strict explicit path. In addition, topologies 101 and 103 are IPv4-only and topology 102 is IPv6-only.

Policy config:

traffic-eng policies

!

policy R1_R15_STRICT_MIXED

headend 1.1.1.1 topology-id 101

endpoint 15.15.15.15 color 104

binding-sid 15010

priority 7 7

install direct srte 192.168.0.101

!

candidate-path preference 100

explicit-path R4_R6_R9_R10_R11_R13_strict_MIXED

metric igp

bandwidth 100 mbps

Explicit path:

traffic-eng explicit-paths

!

explicit-path R4_R6_R9_R10_R11_R13_strict_MIXED

index 10 strict 10.100.2.4

index 20 strict 10.100.8.6

index 30 strict 2001:100:13::9

index 40 strict 2001:100:14::10

index 50 strict 2001:100:15::11

index 60 strict 10.100.17.13

Verify the policy:

TD1#show traffic-eng policy R1_R15_STRICT_MIXED detail

Detailed traffic-eng policy information:

Traffic engineering policy "R1_R15_STRICT_MIXED"

Valid config, Active

Headend 1.1.1.1, topology-id 101, Maximum SID depth: 10

Endpoint 15.15.15.15, color 104

Endpoint type: Node, Topology-id: 103, Protocol: isis, Router-id: 0015.0015.0015.00

Setup priority: 7, Hold priority: 7

Reserved bandwidth bps: 100000000

Install direct, protocol srte, peer 192.168.0.101

Policy index: 9, SR-TE distinguisher: 16777225

Binding-SID: 15010

Candidate paths:

Candidate-path preference 100

Path config valid

Metric: igp

Path-option: explicit

Explicit path name: R4_R6_R9_R10_R11_R13_strict_MIXED

Affinity-set: BLUE_ONLY

Constraint: include-all

List: ['BLUE']

Value: 0x1

This path is currently active

Calculation results:

Aggregate metric: 70

Topologies: ['101', '102', '103']

Segment lists:

[16004, 16006, 17011, 16013, 16015]

Policy statistics:

Last config update: 2024-09-06 10:26:46,385

Last recalculation: 2024-09-06 10:28:36.840

Policy calculation took 0 miliseconds

Note that TD does not use every SID from the explicit path. It uses only the SIDs required to steer traffic via the specified path, plus the ABR SIDs that connect the different IGP domains.

Anycast SID for ABR

In a real-world design, this topology makes the most sense if each pair of ABRs announces an anycast SID. Let’s say R5-R6 announce the prefixes 56.56.56.56/32 and 2002::56/128, and R11-R12 announce the prefixes 11.11.12.12/32 and 2002::1112/128.

As in the previous example, topologies 101 and 103 are IPv4-only and topology 102 is IPv6-only. In order to connect these three IGP instances using anycast SIDs and also use ECMP, configure the following policy:

traffic-eng policies

!

policy R1_R16_LOOSE_ANYCAST_MIXED

headend 1.1.1.1 topology-id 101

endpoint 16.16.16.16 color 112

binding-sid 15013

priority 7 7

install direct srte 192.168.0.101

!

candidate-path preference 100

explicit-path ANYCAST_MIXED

metric igp

bandwidth 100 mbps

Explicit path:

traffic-eng explicit-paths

!

explicit-path ANYCAST_MIXED

index 10 loose 56.56.56.56

index 20 loose 2002::1112

Verify the policy:

TD1#show traffic-eng policy R1_R16_LOOSE_ANYCAST_MIXED detail

Detailed traffic-eng policy information:

Traffic engineering policy "R1_R16_LOOSE_ANYCAST_MIXED"

Valid config, Active

Headend 1.1.1.1, topology-id 101, Maximum SID depth: 10

Endpoint 16.16.16.16, color 112

Endpoint type: Node, Topology-id: 103, Protocol: isis, Router-id: 0016.0016.0016.00

Setup priority: 7, Hold priority: 7

Reserved bandwidth bps: 100000000

Install direct, protocol srte, peer 192.168.0.101

Policy index: 12, SR-TE distinguisher: 16777228

Binding-SID: 15013

Candidate paths:

Candidate-path preference 100

Path config valid

Metric: igp

Path-option: explicit

Explicit path name: ANYCAST_MIXED

This path is currently active

Calculation results:

Aggregate metric: 70

Topologies: ['101', '102', '103']

Segment lists:

[16056, 17112, 16016]

Policy statistics:

Last config update: 2024-09-06 10:26:46,386

Last recalculation: 2024-09-06 10:28:36.842

Policy calculation took 1 miliseconds

Note how the policy uses the respective IPv4 and IPv6 anycast SIDs, thus achieving ECMP load sharing with only one segment list.

EPE and Null-endpoint policies

You can configure EPE policies in a multi-domain environment the same way as regular policies. One caveat pertains to Null-endpoint policies: if you want a Null-endpoint policy to route to a different topology, the local topology MUST NOT have any egress peer matching the constraints. If there is a suitable egress peer in the local topology, TD will try to build the path to this peer, and your explicit path is likely to fail if it points to another topology.
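The caveat can be modelled in a few lines. The sketch below is a simplified illustration of the behavior described above, not TD's selection code: it assumes a matching egress peer in the local topology always takes precedence, and that peers in other topologies are considered only when no local peer matches. The class `EgressPeer`, the function `candidate_egress_peers` and the peer names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EgressPeer:
    name: str
    topology_id: int
    matches_constraints: bool


def candidate_egress_peers(local_topology: int, peers: List[EgressPeer]) -> List[EgressPeer]:
    """Prefer matching peers in the local topology; otherwise fall back to other topologies."""
    matching = [p for p in peers if p.matches_constraints]
    local = [p for p in matching if p.topology_id == local_topology]
    return local if local else matching


peers = [EgressPeer("ISP1", 101, True), EgressPeer("ISP3", 103, True)]
# A matching peer exists in topology 101, so it hides the peer in topology 103.
print([p.name for p in candidate_egress_peers(101, peers)])

peers[0].matches_constraints = False
# With no matching local peer, the peer in topology 103 becomes a candidate.
print([p.name for p in candidate_egress_peers(101, peers)])
```

In the first case an explicit path pointing towards topology 103 would conflict with the selected local peer, which is the failure mode the caveat warns about.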

Bandwidth reservations

Bandwidth reservations work exactly the same as with regular policies. Check the reserved bandwidth using the “show topology id <>” command:

TD1#show topology id 101

---

ISIS links

0004.0004.0004.00 [E][L2][I101][N[c65002][b0][s0004.0004.0004.00]][R[c65002][b0][s0006.0006.0006.00]][L[i10.100.8.4][n10.100.8.6][i2001:100:8::4][n2001:100:8::6]]

IGP metric: 10

TE metric: 500

Affinity: 0x1

Max-bw: 10000000000

Unrsv-bw priority 0: 10000000000

Unrsv-bw priority 1: 10000000000

Unrsv-bw priority 2: 10000000000

Unrsv-bw priority 3: 10000000000

Unrsv-bw priority 4: 10000000000

Unrsv-bw priority 5: 9800000000

Unrsv-bw priority 6: 9800000000

Unrsv-bw priority 7: 9000000000

Policies priority 0: []

Policies priority 1: []

Policies priority 2: []

Policies priority 3: []

Policies priority 4: []

Policies priority 5: ['R1_R11_BLUE_IPV6', 'R1_R11_BLUE_IPV4']

Policies priority 6: []

Policies priority 7: ['R1_R15_STRICT_IPV4', 'R1_R16_LOOSE_ANYCAST_IPV6', 'R1_R16_LOOSE_ANYCAST_IPV4', '5 more policies']

TD1#show topology id 102

Topology information

---

ISIS links

0008.0008.0008.00 [E][L2][I102][N[c65002][b0][s0008.0008.0008.00]][R[c65002][b0][s0012.0012.0012.00]][L[i10.100.12.8][n10.100.12.12][i2001:100:12::8][n2001:100:12::12]]

IGP metric: 10

TE metric: 500

Affinity: 0x2

Max-bw: 10000000000

Unrsv-bw priority 0: 10000000000

Unrsv-bw priority 1: 10000000000

Unrsv-bw priority 2: 10000000000

Unrsv-bw priority 3: 10000000000

Unrsv-bw priority 4: 10000000000

Unrsv-bw priority 5: 10000000000

Unrsv-bw priority 6: 10000000000

Unrsv-bw priority 7: 9400000000

Policies priority 0: []

Policies priority 1: []

Policies priority 2: []

Policies priority 3: []

Policies priority 4: []

Policies priority 5: []

Policies priority 6: []

Policies priority 7: ['R1_ISP3_YELLOW_IPV6_MIXED', 'R1_R16_LOOSE_ANYCAST_IPV6', 'R1_R16_LOOSE_ANYCAST_IPV4', '3 more policies']
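The unreserved bandwidth values in the output above are consistent with a simple rule: the unreserved bandwidth at a given priority is the maximum bandwidth minus everything reserved at that priority or at a numerically lower one. The sketch below reproduces the numbers under that rule. It is an interpretation of the output, not TD's code; the function name `unreserved_bandwidth` is hypothetical, and it assumes that every policy on the link reserves 100 Mbps, including those hidden behind "5 more policies" and "3 more policies".

```python
from typing import Dict, List


def unreserved_bandwidth(max_bw: int, reservations: Dict[int, List[int]]) -> List[int]:
    """Return unreserved bandwidth (bps) for priorities 0..7.

    reservations maps a priority to the bandwidths (bps) reserved at that priority.
    """
    result: List[int] = []
    reserved_so_far = 0
    for priority in range(8):
        reserved_so_far += sum(reservations.get(priority, []))
        result.append(max_bw - reserved_so_far)
    return result


MBPS_100 = 100_000_000  # assumed reservation of every policy on the link

# Topology 101 link: 2 policies at priority 5, 3 + 5 = 8 policies at priority 7.
link_101 = unreserved_bandwidth(10_000_000_000, {5: [MBPS_100] * 2, 7: [MBPS_100] * 8})
# Topology 102 link: 3 + 3 = 6 policies at priority 7.
link_102 = unreserved_bandwidth(10_000_000_000, {7: [MBPS_100] * 6})

for priority, (a, b) in enumerate(zip(link_101, link_102)):
    print(f"priority {priority}: topology 101 link {a}, topology 102 link {b}")
```

Under these assumptions the topology 101 link drops to 9800000000 at priority 5 and to 9000000000 at priority 7, and the topology 102 link drops to 9400000000 at priority 7, matching the show output.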